I would appreciate having an open_movie_monitor application at hand that gets the most out of standard hardware with little effort.

To what extent could applications like lives, xmovie, mplayer, gstreamer, pd+gem,… fill in? Blender might be an option for some.

I was thinking about coding a prototype for XV + X11 extensions in the next year, basically some successor of xjadeo in one way or another. Is anyone intending to do something like this with OpenGL? I need to do some reading before getting more specific, but gstreamer might be an option, too.

These are ideas gathered from development notes that I made while coding xjadeo. I might come back to do proper research on some of them. Some might be misleading or simply wrong, so beware. Comments are welcome anyway.

From the deprecated jack department:

jack_transport_info_t had ->smpte_frame_rate and ->smpte_offset, but jack_get_transport_info() is only available from a process callback, and passing this information along with the current audio frame requires clients to implement drop-frame timecode, SMPTE parsing, etc. The experimental (double) ->audio_frames_per_video_frame suffers the same drawback.

Why not export the SMPTE like the bar, beat, tick in jack_position_t? It might break binary compat,…

jack_position_t->frame_time and ->next_time would provide an alternative interface if they were rational (int) instead of (double). The denominator would depend on the time-master's fps. 1)
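To illustrate what every client currently has to re-implement on its own, here is a minimal sketch in C. It maps a jack audio frame to a video frame using a rational frame rate, and converts a video frame count to 29.97 fps drop-frame SMPTE. The names `rational_fps`, `smpte_tc`, `audio_to_video_frame` and `frame_to_smpte_df` are made up for this example and are not part of any jack API.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical rational frame rate, e.g. 30000/1001 for NTSC. */
typedef struct { uint32_t num, den; } rational_fps;

typedef struct { int h, m, s, f; } smpte_tc;

/* Index of the video frame that is current at the given audio frame
 * (integer math only, rounds down). */
static int64_t audio_to_video_frame(int64_t audio_frame,
                                    uint32_t sample_rate,
                                    rational_fps fps)
{
    assert(audio_frame >= 0 && sample_rate > 0 && fps.den > 0);
    return (audio_frame * fps.num) / ((int64_t)sample_rate * fps.den);
}

/* 29.97 fps drop-frame timecode: frame numbers 0 and 1 are skipped at
 * the start of every minute, except every tenth minute. */
static smpte_tc frame_to_smpte_df(int64_t frame)
{
    const int64_t per_10min = 17982; /* 10 * 60 * 30 - 9 * 2 */
    const int64_t per_min   = 1798;  /* 60 * 30 - 2 */
    smpte_tc tc;
    int64_t d, m;

    assert(frame >= 0);
    d = frame / per_10min;
    m = frame % per_10min;

    frame += 18 * d;                       /* numbers dropped in full 10-min blocks */
    if (m > 2)
        frame += 2 * ((m - 2) / per_min);  /* numbers dropped in the current block */

    tc.f = (int)(frame % 30);
    tc.s = (int)((frame / 30) % 60);
    tc.m = (int)((frame / 1800) % 60);
    tc.h = (int)((frame / 108000) % 24);
    return tc;
}
```

With a rational rate of 30000/1001 and 48 kHz audio, audio frame 48048 falls exactly on video frame 30; no floating point is involved, which is the point of the rational representation suggested above.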

A completely different approach would be to share the session configuration (fps,..), e.g. via lash. This would yield less redundant data in the jack_position struct, but more redundant code everywhere else (unless there's a shared lib or so: can midi++2 fill in?).

I have not gone through all the posts in the jackit-devel archive yet, but I am missing some specs for synchronizing video and jack-audio frames.

Well, maybe it's not exactly jack's job to do that, but providing an API for video similar to jack-MIDI would be great! I.e.: register a video frame to be displayed from/until a certain jack_audio_frame.

Here's some pseudo code of how I imagine a real-time jack video display could work. It is described from the viewpoint of the displaying app, which is a user of the <jack_transport.h> and (proposed) <jack_video.h> APIs.

data structures and definitions

/* this struct will be cast/serialized to conform to

* proposed JACK_VIDEO_TYPE_H data types.. */

typedef struct {

enum colorspace colorspace;

int width,height;

int stream_id;

atom flags; /*< binary buffer flags: in-use/used/mapped/type

* some implementation variants might require

* an additional usage-counter... */

uint8_t **vplane_buffer; /* BLOB(s) */

} vBUFFER;

atomic int64_t Vcnt; /*< counter is incremented each time the screen's

* Vsync wraps around.

* discuss: need for an array?

* interlaced displays, xinerama etc.

*/

typedef struct vQUEUE {

struct vQUEUE *next; /*< a Vcnt tagged [static] cache

* performs better than this linear list

* - but that depends on application and architecture.

*/

int64_t Vcnt_start, Vcnt_end; /*< timestamp in units

* of Vsync counts of this vQueue display(s)

* Vcnt_end is optional (see: flags)

*/

int flags; /*< extra info for this frame (eg.

* "display key-color after Vcnt_end")

*/

jack_nframes_t start, end; /*< redundant debug information */

vBUFFER *vframe; /* pointer to the actual video data or (NULL) */

} vQUEUE;

vQUEUE *start,*end; /*< some might prefer to use static instead or

* mlock(2)ed buffers ...? */

function callbacks as implemented in the player

/* this function is linked to the video-output vertical sync */

(callback) vsync-raster-irq () {

- increment Vcnt;

- if (vQUEUE[head?].Vcnt_start <= Vcnt && Vcnt < vQUEUE[head?].Vcnt_end)

flip buffers()

- flag the buffer as in-use and mark the prev. buffer as used

}

/* this function is registered with

* jack_set_video_process_callback(NEW)

*/

(callback) jack_av_transport_advance () {

- query jack transport position (or recv as argument)

- query or cache "transport-advance-ticks" (currently: audio-buffer-size, later: video-ticks ?)

- calculate(*) Vcnt timestamps and video pts for frames until the next callback.

- check if those video-frames are available by get_jack_video_buffer(%)

- en-queue video frames.

- flush old entries from the queue (maybe do this in a separate thread..)

}
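The two callbacks above can be sketched in compilable C. This is a minimal, single-threaded sketch under stated assumptions: the names `vq_entry`, `vq_state`, `vq_init`, `vq_enqueue` and `vq_vsync` are hypothetical, `frame_id` stands in for the vBUFFER pointer, and expired entries are flushed inside the vsync handler for brevity. A real player would need atomic or lock-free access, since the two callbacks run in different threads.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef struct vq_entry {
    struct vq_entry *next;
    int64_t vcnt_start, vcnt_end; /* show while vcnt_start <= Vcnt < vcnt_end */
    int frame_id;                 /* stand-in for the vBUFFER pointer */
} vq_entry;

typedef struct {
    vq_entry *head, *tail;
    int64_t vcnt;   /* incremented on every vertical sync */
    int on_screen;  /* frame_id currently displayed, -1 if none */
} vq_state;

static void vq_init(vq_state *q)
{
    q->head = q->tail = NULL;
    q->vcnt = 0;
    q->on_screen = -1;
}

/* Called from the transport-advance callback; entries must be
 * appended in display order. */
static void vq_enqueue(vq_state *q, vq_entry *e)
{
    assert(e->vcnt_start < e->vcnt_end);
    e->next = NULL;
    if (q->tail)
        q->tail->next = e;
    else
        q->head = e;
    q->tail = e;
}

/* Called once per vertical sync. Returns 1 if a buffer flip happened. */
static int vq_vsync(vq_state *q)
{
    q->vcnt++;

    /* drop entries whose display interval has expired */
    while (q->head && q->head->vcnt_end <= q->vcnt) {
        q->head = q->head->next;
        if (!q->head)
            q->tail = NULL;
    }

    /* flip if the head entry is due and not yet on screen */
    if (q->head && q->head->vcnt_start <= q->vcnt
        && q->on_screen != q->head->frame_id) {
        q->on_screen = q->head->frame_id;
        return 1;
    }
    return 0;
}
```

When the queue runs empty the last frame simply stays on screen; showing a key-color instead would be the job of the per-entry flags.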

annotations

The calc(*) function can be anything from simple maths to fancy lookup

tables or sequencing code… a hot-swappable plugin system comes to mind

to assign video events to audio frames!
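As an example of the "simple maths" end of that range, the sketch below computes the Vsync count at which a given movie frame should first appear, using integer arithmetic only. The type `rational` and the function `calc_vcnt_for_frame` are hypothetical names for this illustration, and rounding up to the next Vsync is just one possible policy.

```c
#include <assert.h>
#include <stdint.h>

typedef struct { int64_t num, den; } rational;

/* Vsync count at which movie frame n should first be shown:
 * vcnt_offset + ceil(n / movie_fps * display_hz). */
static int64_t calc_vcnt_for_frame(int64_t n, rational movie_fps,
                                   rational display_hz, int64_t vcnt_offset)
{
    int64_t num, den;

    assert(n >= 0);
    assert(movie_fps.num > 0 && movie_fps.den > 0);
    assert(display_hz.num > 0 && display_hz.den > 0);

    num = n * movie_fps.den * display_hz.num;
    den = movie_fps.num * display_hz.den;
    return vcnt_offset + (num + den - 1) / den; /* round up */
}
```

For a 25 fps movie on a 60 Hz display this yields Vsync counts 0, 3, 5, 8, 10, 12 for frames 0 to 5, i.e. frames are held for alternating runs of 3 and 2 refreshes. The Vcnt_end of one queue entry would be the Vcnt_start of the next.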

get_jack_video_buffer(%) could

jack video buffer

an initialization routine would look like this:

{

- open jack + video-display

- async reset Vcnt from jack thread.

- measure frequencies

have a Vsync-irq callback store an array of monotonic jack usecs

and vice versa: store some Vcnt in jack_callbacks.

- if video_clk is faster than audio-clk or has a funny ratio

{ sync reset Vcnt.}

- initialize calc(*) routines with Vcnt offset

set known parameters (eg. video-source-framerate if the

jack-transport-master provides this info.)

}
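The "measure frequencies" step could be as simple as the following sketch. It assumes the Vsync callback has stored an array of monotonic microsecond timestamps (for instance values obtained from `jack_get_time()`). The function names `estimate_rate_hz` and `audio_frames_per_vsync` are hypothetical, and a real implementation would probably want to filter outliers instead of using only the first and last sample.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Average event rate in Hz from n monotonic timestamps in microseconds. */
static double estimate_rate_hz(const uint64_t *usecs, size_t n)
{
    assert(n >= 2 && usecs[n - 1] > usecs[0]);
    return (double)(n - 1) * 1e6 / (double)(usecs[n - 1] - usecs[0]);
}

/* Number of audio frames that pass during one vertical refresh. */
static double audio_frames_per_vsync(double sample_rate, double vsync_hz)
{
    assert(sample_rate > 0.0 && vsync_hz > 0.0);
    return sample_rate / vsync_hz;
}
```

The resulting ratio is what decides whether the clocks have a "funny ratio" and which calc(*) routine to initialize.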

there'll be some <rational_mathematics.h>, not to mention <helper_macros_to_manage_the_vQUEUE.h>, but

cat /dev/null > “NTSC_PAL-magic.h”

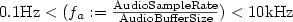

assuming that a jack_av_transport_advance() can be implemented as a jack_audio_callback(TM) it will be executed at:

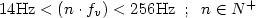

the Vsync callback function is triggered  times per second 2).

I suggest that audio provides the fundamental clock, but video frame

time-stamping should be possible up to the smallest video time

granularity := GCF (vertical refresh rate of the display,

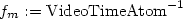

orig-movie-frame-rate). For variable framerate video streams  might be an appropriate value for

might be an appropriate value for

Does  need to satisfied? This opens a lot of A/V

sync issues. I'd propose different sync algorithms depending on ranges

or specific values of

need to satisfied? This opens a lot of A/V

sync issues. I'd propose different sync algorithms depending on ranges

or specific values of  implemented as frame[rate]

conversion Plugins3).

implemented as frame[rate]

conversion Plugins3).
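One way to read the GCF above is as the greatest common factor of the two frame periods, which gives the longest tick that divides both the display refresh interval and the movie frame duration. The sketch below computes it with rational arithmetic; the names `rational`, `gcd64` and `rational_gcf` are hypothetical, and this interpretation of the GCF is an assumption.

```c
#include <assert.h>
#include <stdint.h>

typedef struct { int64_t num, den; } rational;

static int64_t gcd64(int64_t a, int64_t b)
{
    while (b != 0) {
        int64_t t = a % b;
        a = b;
        b = t;
    }
    return a;
}

/* Greatest common factor of two positive rationals:
 * gcf(a/b, c/d) = gcd(a*d, c*b) / (b*d), reduced to lowest terms. */
static rational rational_gcf(rational x, rational y)
{
    rational r;
    int64_t g;

    assert(x.num > 0 && x.den > 0 && y.num > 0 && y.den > 0);
    r.num = gcd64(x.num * y.den, y.num * x.den);
    r.den = x.den * y.den;
    g = gcd64(r.num, r.den);
    r.num /= g;
    r.den /= g;
    return r;
}
```

For a 60 Hz display (period 1/60 s) and a 25 fps movie (period 1/25 s) the common tick is 1/300 s. For a 60 Hz display and NTSC material (period 1001/30000 s) it is 1/30000 s, so one movie frame lasts 1001 ticks and one refresh 500 ticks.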

Anyone up for discussing the impact of introducing “leap-frames” (a concept similar to latency_compensation) that would allow the video thread to offset/re-sync the audio? (..fast audio resampling, featured x-runs,.. :grin: just a thought)

How good is net-jack-jack-transport synchronization, or how good will it get? IMO it is a nice extension to the jack suite. I'd guess that network packet timestamps, CPU clocks and PCI latencies can cause quite some headaches…

Is a jitter > 10kHz reasonable? (Do engineers measure jitter in the frequency domain? Oh well: < 0.1 ms then. That's ~0.1% RMS for those with an arbitrary unit calculator at hand.) Or it should not be worse than +-1.8 video-frames per 10 mins; some say that's bearable to watch.

Some related thoughts that should probably be commented on in the “future of jack” thread on jack-devel: