A while ago, Damien Zammit set out to reverse engineer the Firewire bus protocol of the Digidesign 003 Rack. The goal: write a Linux driver for the device.

While it became clear that the device uses an AMDTP ISO stream, playing audio using the standard format always added hissing noise to the sound. Long story short, Damien and I met on #jack shortly after x-mas'12 and started to analyze the situation.

The idea is to listen to the communication between the PC and the sound-card in a working environment and reverse engineer the protocol in use. Damien has a system (using ProTools) that can drive the device properly and also has access to a Firewire bus analyzer. So we're all set. Well, almost. We also need audio-files that can be played back with the test-system. Those sound-files should contain recognizable [bit] patterns and also cover the complete data space.

Quick tests with sox .dat files and Pd proved cumbersome and not flexible enough. So I hacked up wavgen.c - a small tool that creates audio files with custom bit patterns and generic audio channel combinations.

Back to the problem at hand: Why does this sound-card /hiss/ on neighboring channels when playing non-silent files with the current Linux driver, which follows the AMDTP specs?

We suspected some channel mapping issue at first, but the fact that the hiss is a noise-like signal does not support that hypothesis. So it's time to approach this problem scientifically and look at the raw ISO-stream data going over the wire when playing back single channel waveforms using ProTools, where there is no hissing.

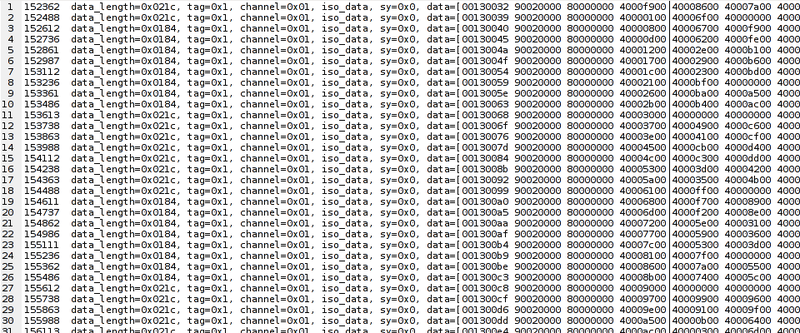

Looking at raw ISO-stream data takes a bit of getting used to. All data is transmitted in quadlets: a group of 4 bytes. Every packet (a line in Fig. 1) starts with two quadlets that specify stream-ID, timing, etc., and is followed by audio+midi data. MIDI values are prefixed with 0x80xxxxxx while audio is identified by 0x40xxxxxx.

Figure 1: Raw firewire data dump. Excerpt
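To make the quadlet layout a bit more tangible, here is a minimal sketch of how a dump line could be picked apart. The function names are made up for this example, and comparing the top byte against exactly 0x40 and 0x80 is an assumption based on the prefixes described above.

```c
#include <stdint.h>

/* Assemble one quadlet from four bytes in bus order (Firewire is big-endian). */
uint32_t quadlet_from_bytes(const uint8_t b[4])
{
    return ((uint32_t)b[0] << 24) | ((uint32_t)b[1] << 16) |
           ((uint32_t)b[2] << 8) | (uint32_t)b[3];
}

/* The top byte acts as a label for the remaining three bytes. */
uint8_t quadlet_label(uint32_t q)
{
    return (uint8_t)(q >> 24);
}

/* Assumption: audio is labelled 0x40 and MIDI 0x80, as seen in the dump. */
int quadlet_is_audio(uint32_t q) { return quadlet_label(q) == 0x40; }
int quadlet_is_midi(uint32_t q)  { return quadlet_label(q) == 0x80; }

/* The lower three bytes carry the 24 bit audio sample. */
uint32_t quadlet_audio_sample(uint32_t q)
{
    return q & 0x00ffffffu;
}
```

The two header quadlets at the start of each packet would have to be skipped before applying this to the payload.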

After some experimentation with various bit patterns using the hissing Linux driver, Damien noticed that it is only the middle byte of the 3 byte (= 24 bit) audio-data stream that is causing the problem. If said byte is non-zero, the channel that contains audio, as well as upward neighboring channels, produce hissing noise. When playing audio where said byte is static or zero, no noise was audible. Interesting.

A short time later we could confirm that the problem is indeed related to bits 8-15 (YY in 0xXXYYZZ) only and started focusing on that data byte alone. To that end, we continued by analyzing bus protocol data dumps of a mono 8 bit sawtooth signal (shifted by 8 bits into the bit 8-15 range) played on channel 1 using ProTools, where there is no hiss.
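For illustration, the test signal and the byte of interest can be expressed in a few lines. This is only a sketch of the idea, not the code from wavgen.c; both function names are invented here.

```c
#include <stdint.h>

/* Extract bits 8-15 (YY in 0xXXYYZZ) from a 24 bit sample. */
uint8_t middle_byte(uint32_t sample24)
{
    return (uint8_t)((sample24 >> 8) & 0xff);
}

/* 8 bit sawtooth shifted into bits 8-15: sample n carries the value
 * n modulo 256 in the middle byte, all other bits stay zero. */
uint32_t sawtooth_sample(uint32_t n)
{
    return (n & 0xff) << 8;
}
```

Over 256 consecutive samples the middle byte walks through every possible value once, while the upper and lower bytes stay silent.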

Yet, looking at the data bytes sent for playing the mono file, we could not make any sense out of it:

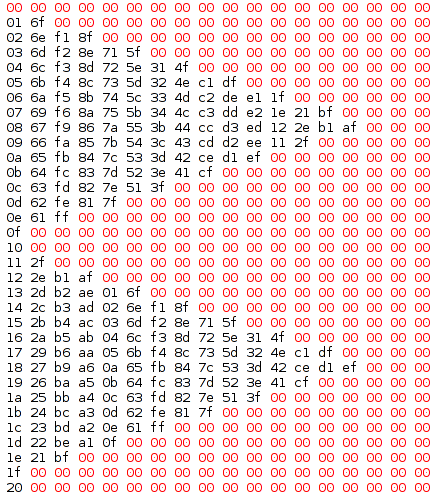

Figure 2: mono 8bit Sawtooth data on the Firewire bus, played with ProTools to the first channel of the 003R. Each column represents an audio-channel - there are 18 channels in total. The Y axis is increasing time.

We clearly see the 8 bit sawtooth in the first column – Fig. 2, 0x00 thru 0x20; but why is there any data sent to the other channels? The fact that it looks like a triangle is just a coincidence, isn't it?

At first Damien confirmed that the pattern is reproducible between restarts of the ISO stream. Next, we wanted to know if the pattern is related to the channel number or if it only depends on the input signal itself: A sawtooth waveform lends itself perfectly for that case because it spans all available 8bit values serially.

So we proceeded to record raw ISO data of the same sawtooth signal played on channel 3, then channel 5 and eventually on both channels 1+5 simultaneously in sync. We were lucky: The same pattern occurred just shifted a few columns to the right depending on playback channel and we were able to conclude that the mysterious pattern is independent of the channel number and only depends on the input signal of bits 8-15 itself.

The algorithm used to combine the patterns when signals are played on multiple channels was still unknown, however, but we knew then that we had all the information we needed to solve this riddle.

Meanwhile speculations ran wild: Is this a neat engineering trick to work around hardware issues? The number of affected neighboring channels depends on the lower nibble of the 2nd byte. Does it address some kind of DC offset correction? In the latter case changing the frequency or DC offset should make a difference… no? Why would engineers do that? There must be a good reason for breaking a standard protocol… :)

Speculating did not help and eventually educated guesses all boiled down to the assumption that this must be some kind of obfuscation attempt to break interoperability, although we can't be sure of the actual motivation of the designers or engineers of the Digidesign device.

Having found a clear bit-dependent pattern, the next step was to understand how this pattern superimposes for multi-channel playback. To make things easier for us, we created a script to extract the relevant bytes from each raw data dump and align them nicely:

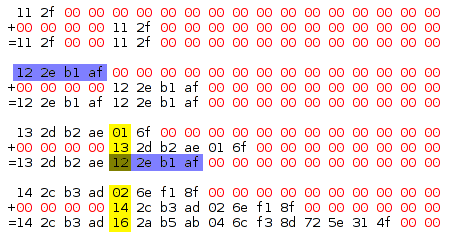

Figure 3: Superposition of multi-channel playback. First line in each block: play sound on channel 1 only. Second line: play on channel 5 only. Third line: play the same audio on channels 1 and 5, which must be a combination of the two. The + stands for some yet unknown operation/algorithm. See also the caption of Fig 2.

We assumed that it must be easy to evaluate the algorithm in real-time in both hardware and software. Furthermore there must not be any data loss. This hints at the XOR operator. After looking at the data-dump for a while and testing some basic operators, XOR was indeed found to be the way the actual non-zero data is combined - see the yellow box in figure 3 - but it did not explain the tail.
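The "no data loss" argument can be spelled out in a couple of lines: XORing with the same byte twice returns the original value, so whoever knows the second operand can always undo the combination. The helper name is only for illustration.

```c
#include <stdint.h>

/* Combine two bytes the way the non-zero data in Fig. 3 appears to be combined. */
uint8_t xor_combine(uint8_t a, uint8_t b)
{
    return (uint8_t)(a ^ b);
}
```

Applying `xor_combine(xor_combine(a, b), b)` yields `a` again for every pair of bytes, which is not true for operators like AND or OR.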

It is also puzzling that combining channel values can result in the actual channel-leak becoming shorter. For example the data for two channel playback of 0x13 spans only 8 channels, while the mono version of the same leaks until channel 10. When staring at the data for a bit longer, one will realize that the patterns following each XOR'ed byte are actually identical to the ones for the single values (see blue boxes in fig.3 – also compare to line 0x12 in fig.2).

The excerpt shown in Fig. 3 was the place where things started to fall into place and everything started to make sense. This was likely due to the visual proximity of the 0x12 value: “hey, I've just seen this before”.

Elated by that discovery, formulating and confirming a hypothesis was a piece of cake.

For each frame of 18 channels, walk the samples for channels 1..18. Always start with an empty - all zero - frame. Sample values are XORed with existing data. Non-zero samples (after XOR) introduce a tail: a fixed pattern depending only on the value after applying the XOR operator.
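The hypothesis can be sketched roughly as follows. This is not the code from the repository: the salt is passed in as a callback, `toy_salt()` is a made-up placeholder and not the real pattern, and the sketch assumes that a non-zero input sample restarts the tail, keyed on the value after XOR. Only the affected byte (bits 8-15) of each channel is handled.

```c
#include <stddef.h>
#include <stdint.h>

#define FRAME_CHANNELS 18

/* Hypothetical callback: salt byte at tail position off (1, 2, ...)
 * following the value idx; returns 0 once the tail has ended. */
typedef uint8_t (*salt_fn)(uint8_t idx, unsigned int off);

/* Placeholder salt for experiments only, NOT the recorded pattern:
 * the tail length is simply the low two bits of idx. */
uint8_t toy_salt(uint8_t idx, unsigned int off)
{
    return (off <= (unsigned int)(idx & 0x3)) ? (uint8_t)(idx + off) : 0;
}

/* Encode the middle bytes of one frame, starting from an all-zero state. */
void encode_frame(const uint8_t in[FRAME_CHANNELS],
                  uint8_t out[FRAME_CHANNELS], salt_fn salt)
{
    uint8_t carry = 0;     /* tail byte pending for the current channel */
    uint8_t idx = 0;       /* value that started the current tail */
    unsigned int off = 0;  /* position inside the current tail */

    for (size_t ch = 0; ch < FRAME_CHANNELS; ch++) {
        out[ch] = (uint8_t)(in[ch] ^ carry);  /* XOR with existing data */
        if (in[ch] != 0) {
            /* assumption: a non-zero sample starts a new tail that
             * depends only on the value after the XOR */
            idx = out[ch];
            off = 0;
        }
        carry = salt(idx, ++off);
    }
}
```

Swapping `toy_salt()` for a lookup into the recorded table is what allows checking the hypothesis against the raw dumps.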

As a first approach, the recorded fixed pattern tails were saved into a look-up-table (LUT). Basically Figure 2 was dumped into a large 255×18 array. This provided for quick verification of the hypothesis, but we deemed it unlikely that Digidesign would use a ~4kByte LUT and the investigation moved towards further reducing complexity.

If you look at the LUT, you will indeed notice some patterns, e.g. the length of the tail – or salt as we eventually called it – depends only on the lower nibble of the data. Also, the lower nibble of the salt always uses the same alternating sequence from the end: 0xf,0x1,0xe,0x2,0xd,0x3,…. The upper nibble of the salt has a 1:1 relationship with the upper nibble of the data and the channel offset. Compare Figure 2: for the 0x0X values, the 2nd column is always 0x6X, and the 3rd 0xfX.
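The alternating lower-nibble sequence is regular enough to be computed instead of stored. The following sketch assumes that the alternation simply continues as in the values listed above and that at most 15 positions are needed (matching the 15 columns of hib[16][15] in the source); the function name is invented for this example.

```c
#include <stdint.h>

/* Lower nibble of the salt, counted from the end of the tail:
 * k = 0 is the last salt byte, k = 1 the one before it, and so on.
 * Assumption: only meaningful for k in 0..14. */
uint8_t salt_low_nibble_from_end(unsigned int k)
{
    if (k % 2 == 0)
        return (uint8_t)((0xf - k / 2) & 0xf);  /* 0xf, 0xe, 0xd, ... */
    return (uint8_t)(((k + 1) / 2) & 0xf);      /* 0x1, 0x2, 0x3, ... */
}
```

Even positions count down from 0xf while odd positions count up from 0x1, which gives the interleaved sequence.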

Clearly there is some structure to the proceedings. Presumably - hardware wise - there is some hard-wiring going on.

You can follow the simplification by reading the source on github. At first the salt length and the lower nibble relationship were broken out.

This left us with a look-up-table of high-nibbles - see hib[16][15] in the source.

Except for the high-nibble 0x9, the sequence of each line in hib[] is identical; it just starts at different offsets and wraps around at the end. Working out the relationship of starting points and data was the last step to simplify the implementation.
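The "same sequence, different starting point" observation boils down to a circular lookup. Here is a minimal sketch with a caller-supplied sequence; the function name is hypothetical, the actual sequence and start offsets are in the source on github, and the special case for high-nibble 0x9 is left out.

```c
#include <stddef.h>
#include <stdint.h>

/* Read entry i of a line that equals the shared sequence seq[0..len-1]
 * rotated so that it begins at index start, wrapping around at the end. */
uint8_t circular_lookup(const uint8_t *seq, size_t len, size_t start, size_t i)
{
    return seq[(start + i) % len];
}
```

With this, a full 16×15 table shrinks to one shared sequence plus one start offset per high nibble.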

I spent some time trying to find a mathematical solution to the algorithm - which is trivial for the lower nibbles - but eventually I decided to use simple lookup tables for performance reasons. Even though the actual computation of the length is only some bit-shifting, bit-masking and binary operations, getting it from a LUT requires fewer CPU cycles, which is an important factor for low latency real-time audio.

The code is available in a git repository on github: https://github.com/x42/003amdtp. Eventually it may be merged into ALSA and Linux itself. ALSA firewire support itself is currently not mainline but in a staging branch at git://git.alsa-project.org/alsa-kprivate.git. So it can take some time.

Even with some small performance optimizations, the current implementation requires two if() branches for every audio sample to calculate and apply the salt. It is not a big deal on modern machines with GHz multi-core CPUs, branch prediction, etc., but it's still a place where things can be optimized further. Two possible branch points (one in digiscrt() and one in digi_encode()) per audio-sample can be considered a no-go for production code. YMMV. Yet the current implementation is a proof-of-concept and it fulfills its purpose.

Likely the whole algorithm can be implemented in a way that only requires binary bit operations but that is pending further investigation.

For now, Damien is happy to be able to use his Digidesign on Linux and the focus is on completing the driver code before delving into further optimizations.

If you do like solving riddles - as we do - any help is appreciated. Linux users who own Digidesign equipment will be grateful.

The current ALSA driver development itself is documented on Damien's site at http://www.zamaudio.com/?p=488.